
Hunyuan Translation Model Version 1.5 (HY-MT)

ollama run sun_leaf/HY-MT



Model Introduction

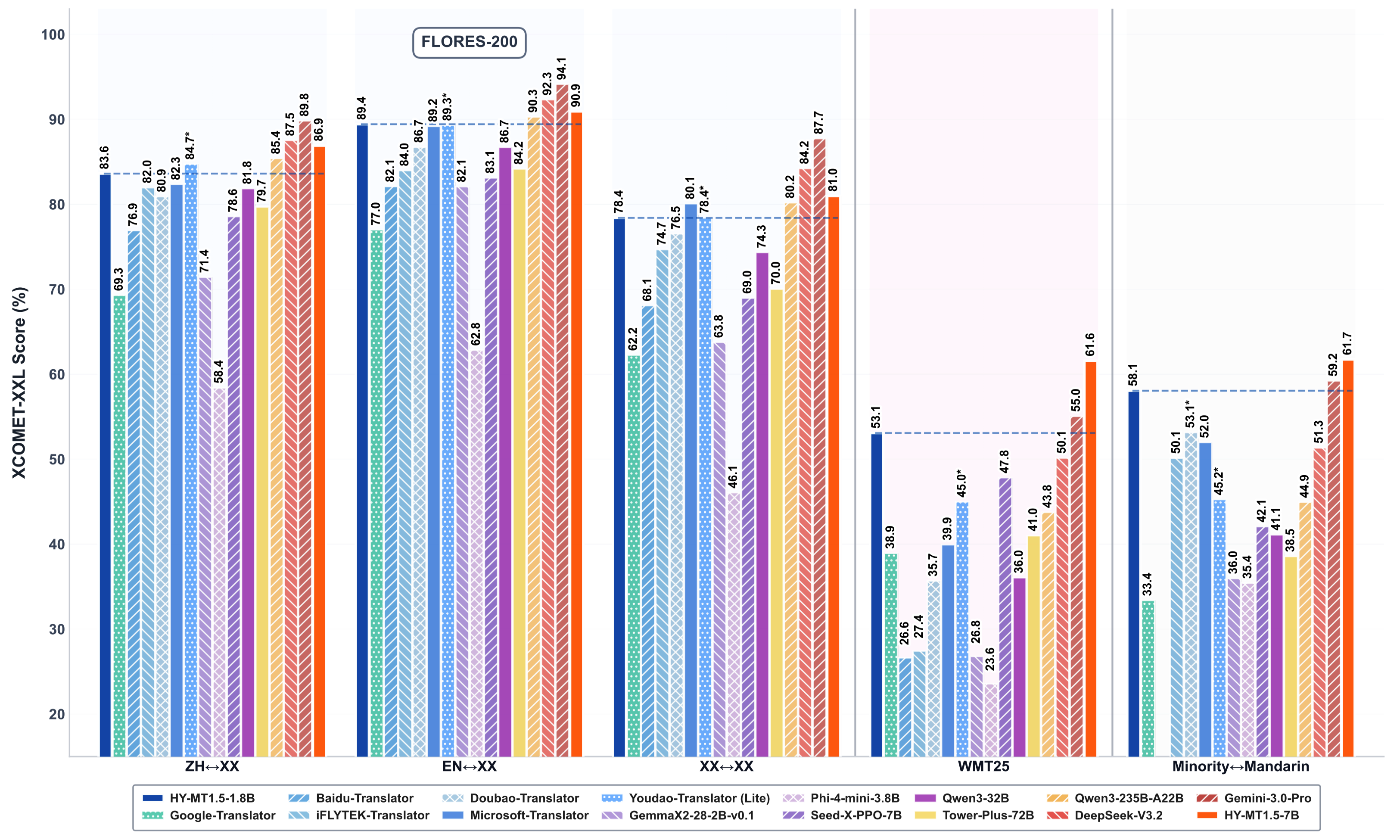

Hunyuan Translation Model Version 1.5 includes two models: the 1.8B-parameter HY-MT1.5-1.8B and the 7B-parameter HY-MT1.5-7B. Both support mutual translation among 33 languages and also cover 5 ethnic and dialect variants. HY-MT1.5-7B is an upgraded version of our WMT25 championship model, optimized for explanatory translation and mixed-language scenarios, with newly added support for terminology intervention, contextual translation, and formatted translation. HY-MT1.5-1.8B has less than one-third the parameters of HY-MT1.5-7B yet delivers comparable translation performance, combining high speed with high quality. After quantization, the 1.8B model can be deployed on edge devices and supports real-time translation scenarios, making it widely applicable.

Key Features and Advantages

- HY-MT1.5-1.8B achieves industry-leading performance among models of the same size, surpassing most commercial translation APIs.

- HY-MT1.5-1.8B supports deployment on edge devices and real-time translation scenarios, offering broad applicability.

- HY-MT1.5-7B, compared to its September open-source version, has been optimized for annotated and mixed-language scenarios.

- Both models support terminology intervention, contextual translation, and formatted translation.

Related News

- 2025.12.30, we open-sourced HY-MT1.5-1.8B and HY-MT1.5-7B on Hugging Face.

- 2025.9.1, we open-sourced Hunyuan-MT-7B and Hunyuan-MT-Chimera-7B on Hugging Face.

Performance

Model Links

| Model Name | Description | Download |
|---|---|---|
| HY-MT1.5-1.8B | Hunyuan 1.8B translation model | 🤗 Model |
| HY-MT1.5-1.8B-FP8 | Hunyuan 1.8B translation model, fp8 quant | 🤗 Model |
| HY-MT1.5-1.8B-GPTQ-Int4 | Hunyuan 1.8B translation model, int4 quant | 🤗 Model |
| HY-MT1.5-1.8B-GGUF | Hunyuan 1.8B translation model, llama.cpp | 🤗 Model |
| HY-MT1.5-7B | Hunyuan 7B translation model | 🤗 Model |
| HY-MT1.5-7B-FP8 | Hunyuan 7B translation model, fp8 quant | 🤗 Model |
| HY-MT1.5-7B-GGUF | Hunyuan 7B translation model, llama.cpp | 🤗 Model |

Prompts

Prompt Template for ZH<=>XX Translation.

将以下文本翻译为{target_language},注意只需要输出翻译后的结果,不要额外解释:

{source_text}

Prompt Template for XX<=>XX Translation, excluding ZH<=>XX.

Translate the following segment into {target_language}, without additional explanation.

{source_text}
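The two basic templates above can be filled in with a small helper. The Chinese template reads roughly "Translate the following text into {target_language}; output only the translated result, without extra explanation". The sketch below is a minimal illustration, not an official client: the function name `build_prompt`, the `involves_chinese` flag, and the exact whitespace between the instruction and the source text are assumptions.

```python
# Templates copied from the README. The blank line between the instruction
# and the source text is an assumption about the exact whitespace.
ZH_TEMPLATE = (
    "将以下文本翻译为{target_language},注意只需要输出翻译后的结果,不要额外解释:\n\n"
    "{source_text}"
)
XX_TEMPLATE = (
    "Translate the following segment into {target_language}, "
    "without additional explanation.\n\n"
    "{source_text}"
)


def build_prompt(source_text: str, target_language: str, involves_chinese: bool) -> str:
    """Use the ZH<=>XX template when Chinese is the source or target, else XX<=>XX.

    `build_prompt` and `involves_chinese` are hypothetical names for this sketch.
    """
    template = ZH_TEMPLATE if involves_chinese else XX_TEMPLATE
    return template.format(target_language=target_language, source_text=source_text)


if __name__ == "__main__":
    print(build_prompt("It's on the house.", "French", involves_chinese=False))
```

The resulting string can be passed as the prompt to `ollama run sun_leaf/HY-MT` or to whichever runtime hosts the model.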

Prompt Template for terminology intervention.

参考下面的翻译:

{source_term} 翻译成 {target_term}

将以下文本翻译为{target_language},注意只需要输出翻译后的结果,不要额外解释:

{source_text}
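The terminology template prefixes the basic ZH prompt with a hint that reads roughly "Refer to the following translation: {source_term} is translated as {target_term}". A minimal sketch of filling it for a single term pair follows; `build_term_prompt` is a hypothetical helper and the line breaks inside the template are an assumption.

```python
# Template copied from the README; the exact line breaks are an assumption.
TERM_TEMPLATE = (
    "参考下面的翻译:\n"
    "{source_term} 翻译成 {target_term}\n\n"
    "将以下文本翻译为{target_language},注意只需要输出翻译后的结果,不要额外解释:\n"
    "{source_text}"
)


def build_term_prompt(source_text: str, target_language: str,
                      source_term: str, target_term: str) -> str:
    """Fill the terminology-intervention template for one term pair (hypothetical helper)."""
    return TERM_TEMPLATE.format(
        source_term=source_term,
        target_term=target_term,
        target_language=target_language,
        source_text=source_text,
    )


if __name__ == "__main__":
    print(build_term_prompt("The LLM answered quickly.", "中文", "LLM", "大语言模型"))
```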

Prompt Template for contextual translation.

{context}

参考上面的信息,把下面的文本翻译成{target_language},注意不需要翻译上文,也不要额外解释:

{source_text}
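The contextual template places the context first, followed by an instruction that reads roughly "With reference to the information above, translate the text below into {target_language}; do not translate the context, and do not add explanations". The sketch below assumes the context is a list of preceding sentences joined by newlines; that joining convention and the name `build_context_prompt` are illustrative assumptions, not something the README specifies.

```python
# Template copied from the README; the exact line breaks are an assumption.
CONTEXT_TEMPLATE = (
    "{context}\n"
    "参考上面的信息,把下面的文本翻译成{target_language},注意不需要翻译上文,也不要额外解释:\n"
    "{source_text}"
)


def build_context_prompt(source_text: str, target_language: str,
                         context_lines: list[str]) -> str:
    """Fill the contextual template (hypothetical helper).

    Joining earlier sentences with newlines is an assumption of this sketch.
    """
    return CONTEXT_TEMPLATE.format(
        context="\n".join(context_lines),
        target_language=target_language,
        source_text=source_text,
    )


if __name__ == "__main__":
    history = ["She opened the bank account last week.", "The bank charged a fee."]
    print(build_context_prompt("It was higher than expected.", "中文", history))
```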

Prompt Template for formatted translation.

将以下<source></source>之间的文本翻译为中文,注意只需要输出翻译后的结果,不要额外解释,原文中的<sn></sn>标签表示标签内文本包含格式信息,需要在译文中相应的位置尽量保留该标签。输出格式为:<target>str</target>

<source>{src_text_with_format}</source>
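The formatted-translation template asks the model to translate the text between `<source></source>` into Chinese, to keep `<sn></sn>` tags at the corresponding positions, and to answer in the form `<target>str</target>`. A minimal sketch of building the prompt and pulling the translation out of a reply is shown below. The helper names and the regular expression are assumptions, and the sample reply is a hand-written illustration rather than real model output.

```python
import re

# Template copied from the README; the blank line before <source> is an assumption.
FORMAT_TEMPLATE = (
    "将以下<source></source>之间的文本翻译为中文,注意只需要输出翻译后的结果,不要额外解释,"
    "原文中的<sn></sn>标签表示标签内文本包含格式信息,需要在译文中相应的位置尽量保留该标签。"
    "输出格式为:<target>str</target>\n\n"
    "<source>{src_text_with_format}</source>"
)

# Non-greedy match so only the first <target>...</target> pair is captured.
TARGET_RE = re.compile(r"<target>(.*?)</target>", re.DOTALL)


def build_format_prompt(src_text_with_format: str) -> str:
    """Wrap the tagged source text in the formatted-translation template."""
    return FORMAT_TEMPLATE.format(src_text_with_format=src_text_with_format)


def extract_target(model_output: str):
    """Return the text inside <target></target>, or None if the tags are missing."""
    match = TARGET_RE.search(model_output)
    return match.group(1) if match else None


if __name__ == "__main__":
    print(build_format_prompt("Click <sn>Save</sn> to continue."))
    # Hand-written stand-in for a model reply, for illustration only.
    print(extract_target("<target>点击<sn>保存</sn>继续。</target>"))
```

Returning `None` when the tags are absent lets the caller decide whether to retry or fall back to the raw output.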

Quantization Compression

We used our own compression tool, AngelSlim, to produce the FP8 and INT4 quantized models. AngelSlim is a toolset dedicated to providing a more user-friendly, comprehensive, and efficient model compression solution.

FP8 Quantization

We use FP8-static quantization. FP8 quantization uses an 8-bit floating-point format: a small amount of calibration data (no training required) is used to pre-determine the quantization scale, and the model weights and activation values are then converted to FP8, which improves inference efficiency and lowers the deployment threshold. You can quantize the model yourself with AngelSlim, or directly download our open-source models that have already been quantized with AngelSlim.
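To make the idea of a pre-determined scale concrete, here is a minimal pure-Python sketch of static quantization. It is not AngelSlim's implementation or API. It assumes a single per-tensor scale, the E4M3 flavor of FP8 (largest finite value 448), and a simulated round trip back to float; all function names are hypothetical.

```python
import math

E4M3_MAX = 448.0  # largest finite E4M3 value; the choice of E4M3 is an assumption


def calibrate_scale(calibration_batches):
    """Static per-tensor scale: map the largest calibration magnitude onto E4M3_MAX."""
    amax = max(abs(v) for batch in calibration_batches for v in batch)
    return amax / E4M3_MAX if amax > 0 else 1.0


def round_to_e4m3(x):
    """Round x to the nearest E4M3 grid point (3 mantissa bits), saturating at +/-448."""
    if x == 0.0:
        return 0.0
    x = max(-E4M3_MAX, min(E4M3_MAX, x))
    # -6 is the smallest normal exponent; below it the grid spacing stays fixed.
    exponent = max(math.floor(math.log2(abs(x))), -6)
    step = 2.0 ** (exponent - 3)  # spacing between representable values
    return round(x / step) * step


def fake_quantize(values, scale):
    """Quantize onto the simulated FP8 grid, then convert back to float."""
    return [round_to_e4m3(v / scale) * scale for v in values]


if __name__ == "__main__":
    # The scale is fixed once from calibration data, then reused at inference time.
    scale = calibrate_scale([[0.5, -2.0], [1.0, -4.48]])
    print(scale, fake_quantize([0.3, 1.7, 9.0], scale))
```

Because the scale is computed once from calibration data, no statistics need to be gathered at inference time. Values that exceed the calibrated range saturate at the FP8 maximum.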