
🐳 Aurora is a Chinese-capable variant of the Mixtral-8x7B MoE (Mixture-of-Experts) model, instruction-tuned for bilingual dialogue in Chinese and English across a wide range of open-domain topics.

ollama run wangrongsheng/aurora


Overview

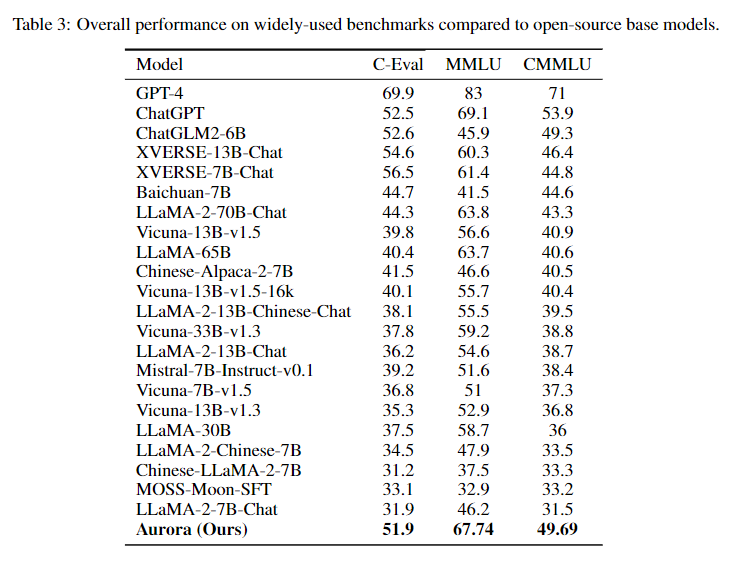

Aurora is a Chinese-capable variant of the Mixtral-8x7B MoE (Mixture-of-Experts) model, instruction-tuned for bilingual dialogue in Chinese and English across a wide range of open-domain topics. The project is on GitHub: https://github.com/WangRongsheng/Aurora

Run the Aurora model

ollama run wangrongsheng/aurora

# or, for a single-round dialogue:

ollama run wangrongsheng/aurora "What is your favourite condiment?"

Check the Aurora model

ollama ls

wangrongsheng/aurora is listed alongside any other installed models.
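
For scripted checks, the listing can be filtered instead of read by eye. The following is a minimal sketch, not part of the Aurora project: `has_model` is a hypothetical helper, and it assumes that `ollama ls` prints a header row followed by one row per model, with a `name:tag` value in the first column.

```shell
# has_model NAME
# Reads `ollama ls` output on stdin and succeeds if NAME is installed.
# Hypothetical helper; assumes a header row and "name:tag" in column 1.
has_model() {
  # Skip the header, keep column 1, drop the ":tag" suffix,
  # then look for an exact, literal match of the requested name.
  awk 'NR > 1 { sub(/:.*/, "", $1); print $1 }' | grep -qxF -- "$1"
}

# Example (requires Ollama to be installed):
# ollama ls | has_model wangrongsheng/aurora && echo "Aurora is installed"
```

Matching on the whole first column, rather than a plain `grep` for the name, avoids false positives from models whose names merely contain `aurora`.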

Remove the Aurora model

ollama rm wangrongsheng/aurora

Use via the API

curl -X POST http://localhost:11434/api/generate -d '{
  "model": "wangrongsheng/aurora",
  "prompt": "What is your favourite condiment?"
}'
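
By default, `/api/generate` streams its answer as a sequence of JSON objects, one per line. When a single JSON object is easier to handle, the request can set `"stream": false`. The sketch below wraps that request in two shell functions. `build_payload` and `generate` are hypothetical names introduced here, the server is assumed to be listening on the default `localhost:11434`, and the prompt is assumed to contain no double quotes or backslashes because no JSON escaping is done.

```shell
# build_payload MODEL PROMPT
# Prints the JSON request body for /api/generate.
# "stream": false requests one JSON object instead of a stream of chunks.
# Assumption: MODEL and PROMPT contain no double quotes or backslashes.
build_payload() {
  printf '{"model": "%s", "prompt": "%s", "stream": false}' "$1" "$2"
}

# generate MODEL PROMPT
# Sends the request to a local Ollama server and prints the raw JSON reply.
generate() {
  curl -s http://localhost:11434/api/generate -d "$(build_payload "$1" "$2")"
}

# Example (requires a running Ollama server):
# generate wangrongsheng/aurora "What is your favourite condiment?"
```

The generated text is in the `response` field of the reply. If `jq` is installed, piping the output through `jq -r '.response'` prints only that text.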

Citation

If you find our work helpful, please consider citing it.

@misc{wang2023auroraactivating,
  title={Aurora: Activating Chinese chat capability for Mixtral-8x7B sparse Mixture-of-Experts through Instruction-Tuning},
  author={Rongsheng Wang and Haoming Chen and Ruizhe Zhou and Yaofei Duan and Kunyan Cai and Han Ma and Jiaxi Cui and Jian Li and Patrick Cheong-Iao Pang and Yapeng Wang and Tao Tan},
  year={2023},
  eprint={2312.14557},
  archivePrefix={arXiv},
  primaryClass={cs.CL}
}